Figma to Native

Claude + Xcode

Year

2026

Website

www.xapien.com

My Role

Principal Product Designer · AI-Assisted Prototyping

Product

Objective

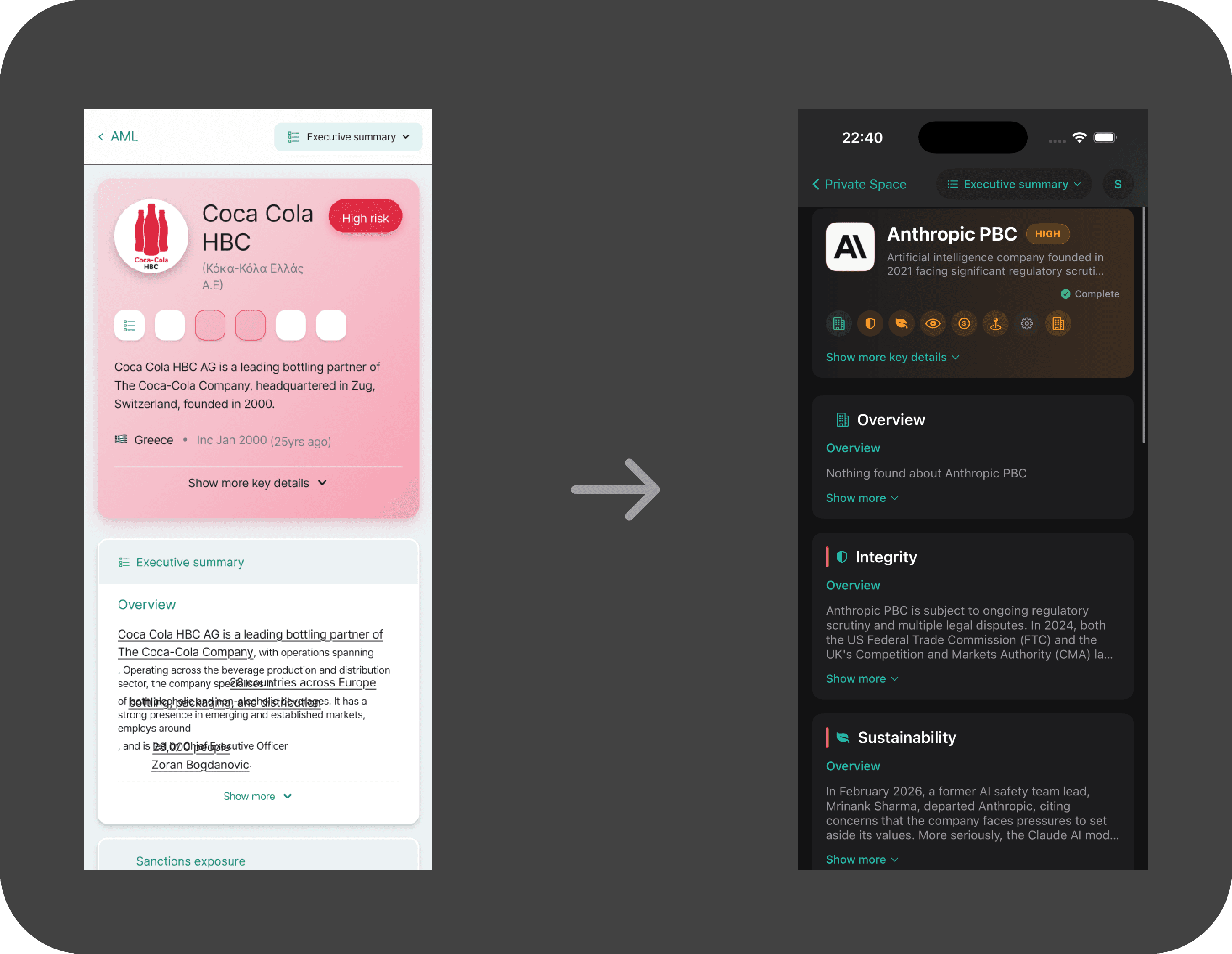

As part of exploring the next evolution of Xapien’s platform, I set out to test how far AI-assisted development could compress the distance between product design and native execution.

Rather than creating another high-fidelity prototype in Figma, I rebuilt key parts of the platform in SwiftUI using Claude to orchestrate code generation, architecture decisions, and iteration cycles.

The goal was to evaluate whether a designer-led workflow could meaningfully produce a functional, device-tested native experience, and uncover new interaction patterns only possible on iOS.

Process

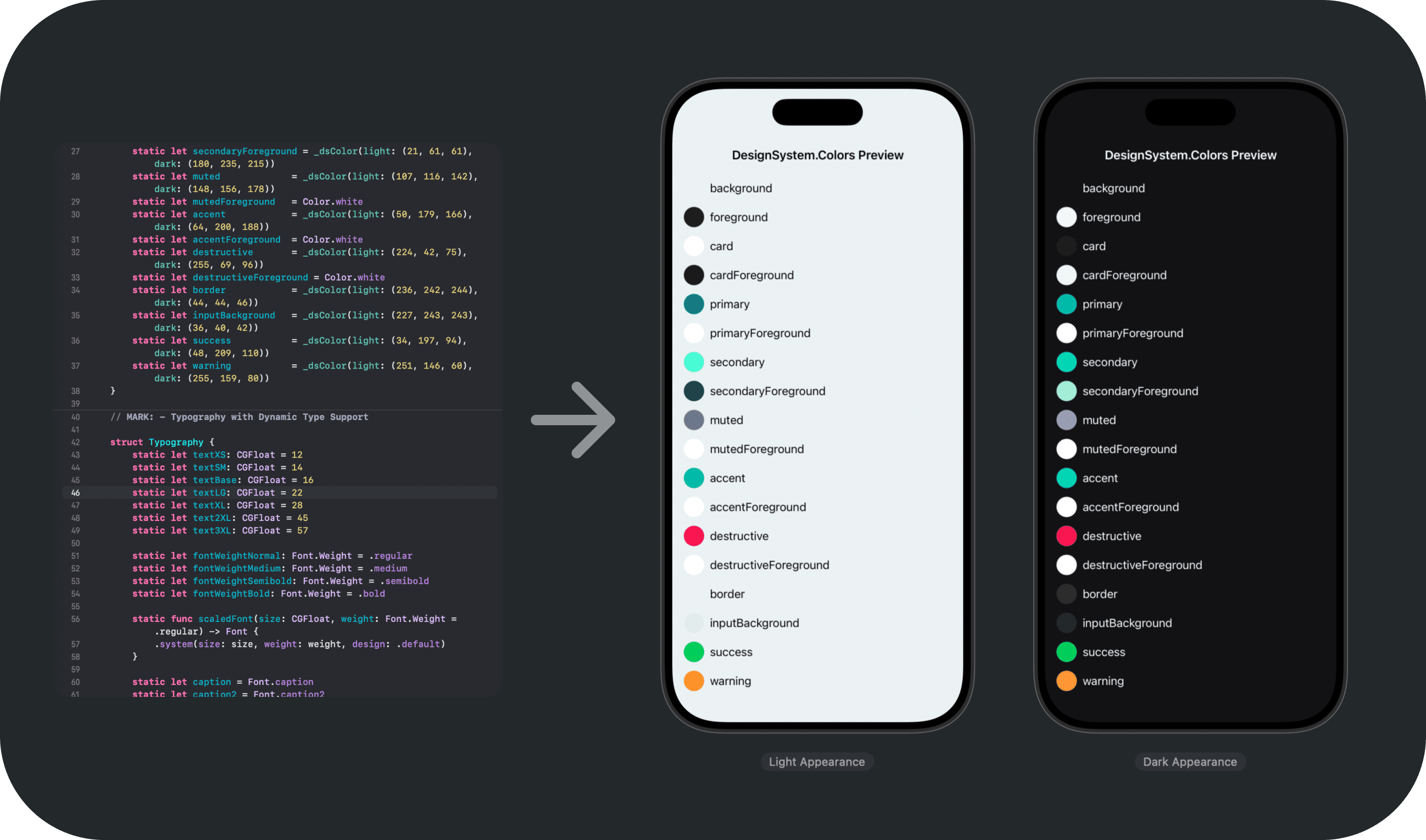

1. Design System Extraction

I began by feeding our Figma components (spacing, typography scales, colour variables, layout primitives, and state logic) into Claude Code so the system could translate cleanly into SwiftUI structures.
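To make the token translation concrete, here is a minimal sketch of how extracted Figma values might land in Swift. Every name and value in it (`Spacing`, `TypeScale`, `ColorToken`, `Palette`, `PrimaryButtonLabel`, the hex codes) is a hypothetical placeholder rather than anything from the actual Xapien design system, and the SwiftUI part only compiles on platforms where SwiftUI is available.

```swift
import Foundation

// Hypothetical spacing tokens; values are illustrative only.
enum Spacing {
    static let xs: CGFloat = 4
    static let sm: CGFloat = 8
    static let md: CGFloat = 16
    static let lg: CGFloat = 24
}

// Hypothetical type scale in points.
enum TypeScale {
    static let caption: CGFloat = 12
    static let body: CGFloat = 16
    static let title: CGFloat = 24
}

/// A colour token stored as a 24-bit hex value, as it might be exported from design variables.
struct ColorToken: Equatable {
    let hex: UInt32

    /// Red, green, and blue components in the 0...1 range.
    var rgb: (red: Double, green: Double, blue: Double) {
        let r = Double((hex >> 16) & 0xFF) / 255
        let g = Double((hex >> 8) & 0xFF) / 255
        let b = Double(hex & 0xFF) / 255
        return (r, g, b)
    }
}

// Hypothetical palette entries.
enum Palette {
    static let accent = ColorToken(hex: 0x3366FF)
    static let surface = ColorToken(hex: 0xFFFFFF)
}

#if canImport(SwiftUI)
import SwiftUI

extension ColorToken {
    /// Bridges the token into a SwiftUI colour.
    var color: Color {
        Color(red: rgb.red, green: rgb.green, blue: rgb.blue)
    }
}

/// A reusable label styled only from tokens, with no hard-coded values.
struct PrimaryButtonLabel: View {
    let title: String

    var body: some View {
        Text(title)
            .font(.system(size: TypeScale.body, weight: .semibold))
            .foregroundColor(Palette.surface.color)
            .padding(.vertical, Spacing.sm)
            .padding(.horizontal, Spacing.md)
            .background(Palette.accent.color)
            .cornerRadius(Spacing.sm)
    }
}
#endif
```

Keeping the tokens as plain Swift values, separate from the views, means they can be regenerated from the design source without touching view code.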

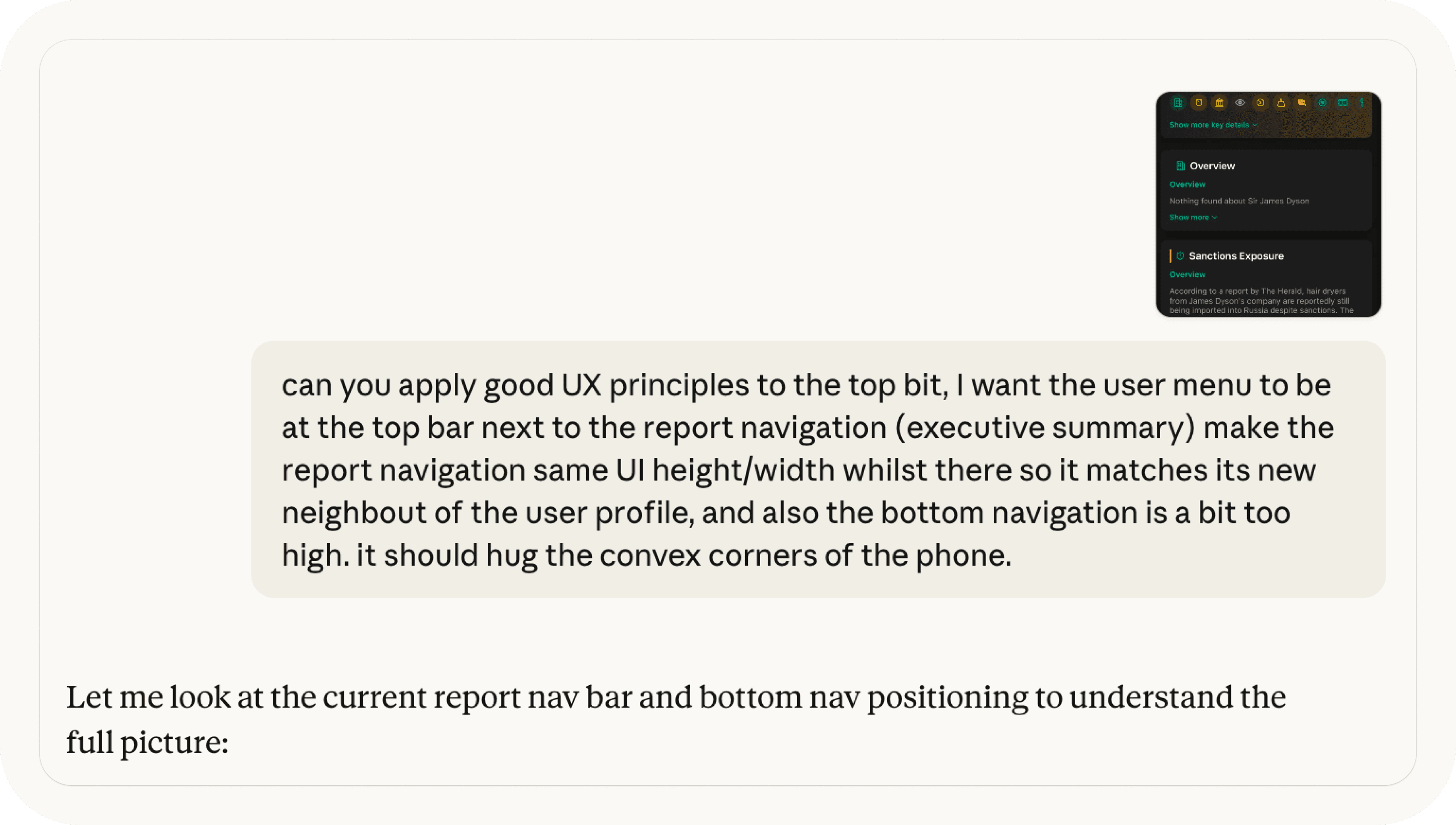

2. Prompt-Driven Architecture

Using Claude, I structured prompts around view hierarchy, navigation stacks, state management, and data modelling rather than asking only for surface-level UI code. This required clear articulation of interaction intent and system behaviour.
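As an illustration of what "architecture first" can mean in practice, the sketch below keeps navigation state as plain data and lets a SwiftUI `NavigationStack` render it. The `Route` cases, the `Router` type, and `RootView` are assumptions made up for this example; the real prototype's screens and state model are not described here.

```swift
/// Hypothetical destinations, modelled as data rather than as views.
enum Route: Hashable {
    case detail(id: String)
    case settings
}

/// Navigation state as a plain value type, so its rules can be specified and tested apart from the UI.
struct Router {
    var path: [Route] = []

    /// Pushes a route, ignoring an immediate duplicate of the current top of the stack.
    mutating func push(_ route: Route) {
        guard path.last != route else { return }
        path.append(route)
    }

    /// Pops the top route; does nothing when already at the root.
    mutating func pop() {
        _ = path.popLast()
    }

    /// Clears the stack and returns to the root screen.
    mutating func popToRoot() {
        path.removeAll()
    }
}

#if canImport(SwiftUI)
import SwiftUI

@available(iOS 16.0, macOS 13.0, tvOS 16.0, watchOS 9.0, *)
struct RootView: View {
    @State private var router = Router()

    var body: some View {
        // The stack is driven entirely by router.path.
        NavigationStack(path: $router.path) {
            List {
                Button("Open item 42") { router.push(.detail(id: "42")) }
                Button("Settings") { router.push(.settings) }
            }
            .navigationTitle("Home")
            .navigationDestination(for: Route.self) { route in
                switch route {
                case .detail(let id):
                    Text("Detail for \(id)")
                case .settings:
                    Button("Back to start") { router.popToRoot() }
                }
            }
        }
    }
}
#endif
```

Describing routes and state transitions in a prompt before asking for any view code gives generated views a fixed contract to conform to, which is the intent behind this step.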

3. Iterative Native Build

SwiftUI views were generated, refined, and restructured inside Xcode. I corrected user-flow assumptions, optimised layout constraints, and aligned component behaviour with product intent, using our design system and a B2C level of UI craft as guidance.

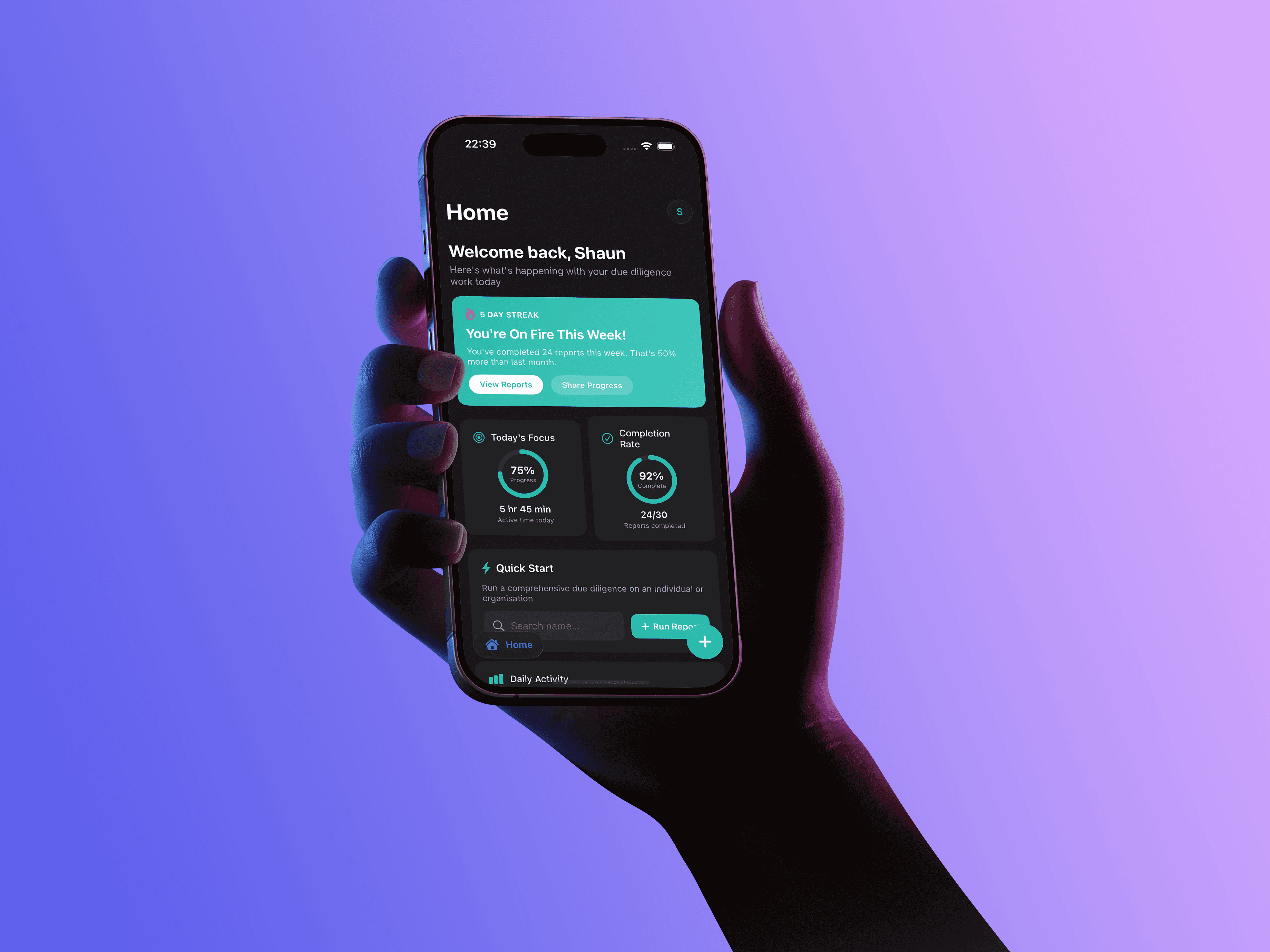

4. On-Device Testing

The prototype was deployed to the simulator and a physical device to test gesture handling, scroll behaviour, typography scaling, and performance under real constraints.

This process reframed AI not as an autopilot, but as a rapid iteration engine directed by product judgement.

Outcome

This exploration showed that AI-assisted development can meaningfully reduce the gap between product thinking and functional native output.

While not production-ready, the prototype surfaced new mobile-first interaction opportunities and exposed where architectural discipline remains critical.

More importantly, it demonstrated that designers can now operate closer to execution, validating platform-native ideas without waiting for full engineering allocation.

AI did not replace judgement; it amplified it.

Standout Features

Designer-led native prototyping workflow

SwiftUI architecture generated and refined via structured prompting

Component system translated from Figma tokens to reusable Swift views

Navigation and state management logic defined at prompt level

On-device validation of gesture-first UX patterns

Rapid iteration cycles measured in minutes, not sprints

Research + Personas

This initiative was exploratory rather than research-led.

The objective was to understand tooling capability, interaction constraints, and system translation fidelity between design and native execution.

MarComs

Presented internally as a strategic R&D case study during our 2026 Hackathon on AI-assisted product development.